Artificial intelligence infrastructure is entering a new phase where scale, speed and specialisation define competitive advantage.

Hyperscalers are no longer upgrading existing estates incrementally; instead, they are building super compute sites designed from inception to train and run large AI models at industrial scale.

Discussing Meta’s long-term compute strategy in a Facebook post, CEO Mark Zuckerberg said: “Meta is planning to build tens of gigawatts this decade, and hundreds of gigawatts or more over time.

“How we engineer, invest and partner to build this infrastructure will become a strategic advantage.”

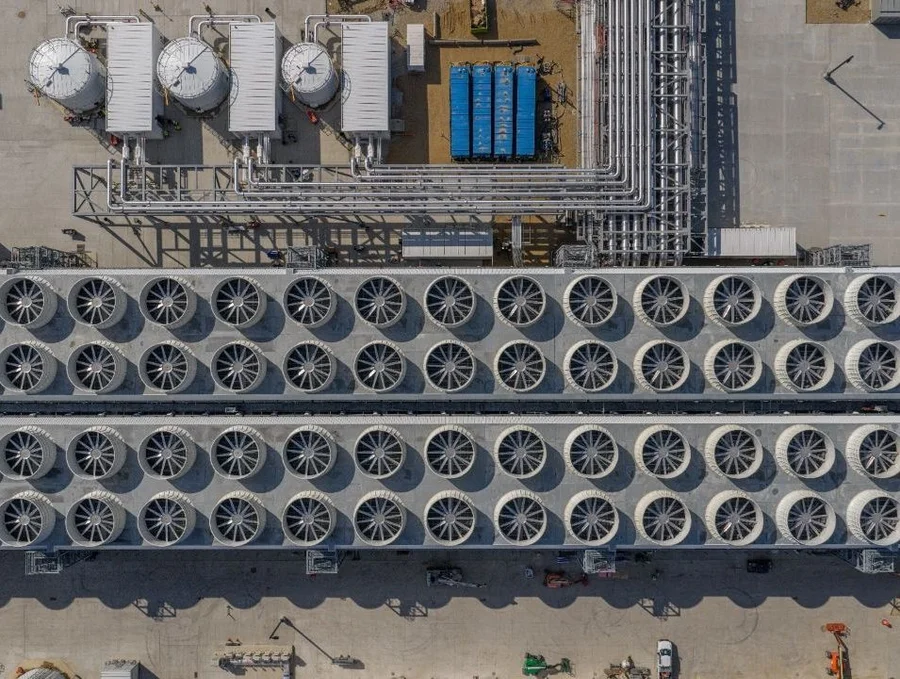

These facilities are optimised for AI workloads from day one. Power delivery, cooling systems and network fabrics are engineered around GPU-dense racks rather than general-purpose compute. The result is a new class of AI-first infrastructure that treats training and inference as core industrial processes.

NVIDIA Founder and CEO Jensen Huang captures this shift in thinking: “AI is now infrastructure, and this infrastructure, just like the internet, just like electricity, needs factories.

“These factories are essentially what we build today.”

From data centres to AI factories

The language used by AI leaders reflects a broader redefinition of what these sites represent. Rather than neutral compute hubs, they are increasingly framed as production environments that convert electricity and data into tokens, models and services.

At Computex 2025, Huang told attendees that “they’re not data centres of the past… they are, in fact, AI factories. You apply energy to it, and it produces something incredibly valuable, and these things are called tokens”.

This framing aligns with how cloud providers are planning their next decade of AI investment.

Facilities are being designed to support higher rack densities, liquid cooling and rapid hardware refresh cycles to keep pace with GPU development.

Gigawatt campuses redraw the AI map

The most visible signal of this shift is the emergence of gigawatt-scale AI campuses. These sites concentrate vast amounts of compute into a small number of strategic locations.

In May 2025, the UAE announced plans for a 10-square-mile AI campus in Abu Dhabi designed to scale to around 5GW. The project positions the Gulf as a regional platform for US hyperscalers and a key node in global AI supply chains.

In the US, xAI is pursuing a similarly ambitious approach. A January 2026 update confirmed that the company’s Colossus complex in Memphis now targets 2GW of capacity with more than 555,000 NVIDIA GPUs installed.

This places it at roughly four times the power draw of the next largest dedicated AI training site and marks a decisive move towards single-site scale.
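As a rough back-of-envelope check on those figures, the sketch below divides the 2GW target by the 555,000-GPU count to get an all-in site power budget per GPU. It assumes both numbers describe the same build-out, which the update may not intend, and the function name is purely illustrative.

```python
def site_watts_per_gpu(site_gw: float, gpu_count: int) -> float:
    """Total site power divided by GPU count, in watts.

    This is an all-in figure: it spreads cooling, networking and every
    other site load across the GPUs, so it is not a chip-level rating.
    """
    if gpu_count <= 0:
        raise ValueError("gpu_count must be positive")
    return site_gw * 1e9 / gpu_count


if __name__ == "__main__":
    # Assumption: 2GW and 555,000 GPUs refer to the same build-out.
    print(f"{site_watts_per_gpu(2.0, 555_000):.0f} W of site power per GPU")
```

Under that assumption the budget works out to about 3.6kW of site power per GPU.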

Hyperscalers compress time and space

Alongside scale, build speed has become a differentiator. Industry analysis suggests Microsoft aims to double its data centre footprint by 2027, adding around 4GW of new capacity across more than 70 regions.

To achieve this, the company is compressing traditional campus build cycles from around 24 months to as little as 12 to 15 months through modular design, repeatable templates and deep partnerships with utilities.

Specialist AI infrastructure providers are also playing a growing role.

In Europe, Nscale has signed a multi-year agreement to deliver approximately 12,600 NVIDIA GB300 GPUs for Microsoft at the Start Campus facility in Sines, Portugal.

The company is also developing what it describes as the UK’s largest NVIDIA AI supercomputer at its Loughton AI Campus, a 50MW site designed to scale to 90MW.

Rani Borkar, President of Azure Hardware Systems and Infrastructure at Microsoft, says: “Azure’s AI systems and data centres are built for the future of accelerated computing, enabling integration of NVIDIA’s latest generation GPUs across our expanding fleet of next gen AI superfactories.”

Risk concentration and resilience

As AI workloads consolidate into fewer, larger sites, questions around resilience, energy sourcing and risk concentration become more acute. A single 2GW campus can draw as much power as more than a million homes and often depends on dedicated generation assets or multi-gigawatt grid connections.

These dependencies expose AI infrastructure to regulatory scrutiny, climate volatility and geopolitical tension in ways that smaller distributed estates did not.
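The household comparison above can be sanity-checked with simple arithmetic. The sketch below is illustrative only: it assumes the campus draws its full 2GW continuously and that an average home uses about 10,500 kWh of electricity a year, a figure in the range commonly cited for US households but not one taken from this article.

```python
HOURS_PER_YEAR = 8760  # 365 days x 24 hours


def homes_equivalent(site_gw: float, home_kwh_per_year: float) -> float:
    """Number of average homes whose combined draw matches the site."""
    if home_kwh_per_year <= 0:
        raise ValueError("home_kwh_per_year must be positive")
    # Average continuous draw of one home, in kilowatts.
    home_kw = home_kwh_per_year / HOURS_PER_YEAR
    # Convert site capacity from gigawatts to kilowatts.
    site_kw = site_gw * 1e6
    return site_kw / home_kw


if __name__ == "__main__":
    # Assumption: 10,500 kWh per home per year (hypothetical input).
    homes = homes_equivalent(2.0, 10_500)
    print(f"{homes / 1e6:.2f} million homes")
```

With these inputs the result is a little under 1.7 million homes, consistent with the "more than a million" description. A lower per-home figure, as is typical in many European countries, would push the equivalent higher.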

Yet the direction of travel is clear. AI training and inference are increasingly anchored in highly specialised facilities that blur the boundary between commercial infrastructure and assets of national importance.

At AWS re:Invent in December 2025, AWS CEO Matt Garman said: “Getting to a future of billions of agents, where every organisation is getting real-world value and results from AI, is going to require us to push the limits of what’s possible with the infrastructure.”

For AI leaders, the challenge is no longer whether to build at super compute scale but how to do so while maintaining reliability, sustainability and trust as AI becomes embedded in every sector of the economy.