AMD has set out its vision for powering the next phase of artificial intelligence development through yotta-scale data centre infrastructure, announcing new platforms and accelerators at CES 2026 in Las Vegas.

During the show’s opening keynote, AMD Chair and CEO Dr Lisa Su outlined how the company plans to address the computational requirements of AI training and inference as global capacity undergoes rapid expansion. The announcements encompass rack-scale infrastructure, enterprise accelerators and next-generation GPUs – all targeting hyperscale and enterprise data centre environments.

“At CES, our partners joined us to show what’s possible when the industry comes together to bring AI everywhere, for everyone,” Su says. “As AI adoption accelerates, we are entering the era of yotta-scale computing, driven by unprecedented growth in both training and inference.

“AMD is building the compute foundation for this next phase of AI through end-to-end technology leadership, open platforms and deep co-innovation with partners across the ecosystem.”

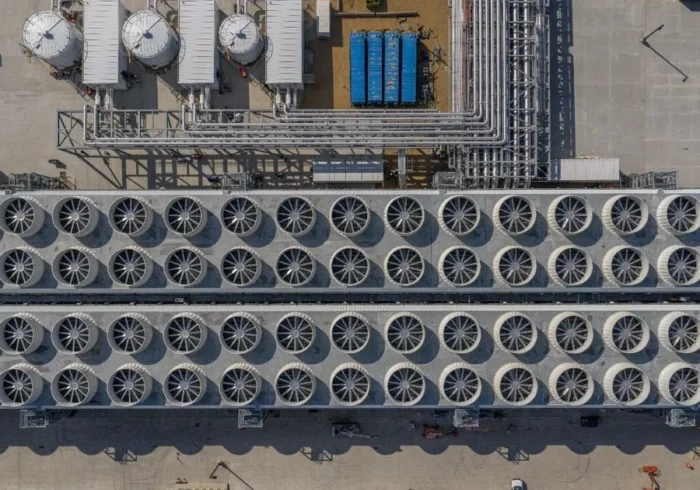

AMD says compute infrastructure is fundamental to AI’s expansion, and estimates that global capacity could grow from approximately 100 zettaflops today to more than 10 yottaflops within five years. Supporting this growth will require modular and open rack designs capable of evolving across multiple product generations whilst maintaining energy efficiency and bandwidth at scale.
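To put that projection in perspective, the short sketch below works through the arithmetic. It uses only the two figures AMD quoted and assumes, purely for illustration, that capacity compounds at a steady rate over the five years; the variable names are hypothetical and the result is a lower bound, since AMD said "more than" 10 yottaflops.

```python
# SI prefixes: 1 zettaflop = 10**21 FLOPS, 1 yottaflop = 10**24 FLOPS
ZETTA = 10**21
YOTTA = 10**24

current_flops = 100 * ZETTA    # "approximately 100 zettaflops today"
projected_flops = 10 * YOTTA   # "more than 10 yottaflops" (treated as a floor)
years = 5                      # "within five years"

# Overall multiple between today's figure and the projection
growth_factor = projected_flops / current_flops

# Assumption: steady compound growth, so the annual factor is the fifth root
annual_factor = growth_factor ** (1 / years)

print(f"Overall growth: at least {growth_factor:.0f}x")
print(f"Implied average annual growth: about {annual_factor:.2f}x")
```

On these assumptions, the projection amounts to at least a hundredfold increase, or global capacity multiplying by roughly two and a half times every year.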

Helios platform targets exascale performance

Central to AMD’s infrastructure strategy is the Helios rack-scale platform, which the company described as its blueprint for yotta-scale data centre architecture. AMD says Helios is designed to deliver up to three AI exaflops of performance in a single rack, targeting trillion-parameter model training and large-scale inference workloads.

The platform combines AMD Instinct MI455X GPUs and AMD EPYC Venice CPUs with AMD Pensando Vulcano NICs, which provide high-speed scale-out networking. These components are unified through the AMD ROCm software ecosystem, reinforcing the company’s commitment to open platforms within data centre environments.

At CES, AMD offered an early look at Helios and unveiled the full AMD Instinct MI400 Series accelerator portfolio, whilst also previewing its next-generation MI500 Series GPUs.

MI400 and MI500 series accelerators

A key addition to the MI400 Series is the AMD Instinct MI440X GPU, designed specifically for on-premises enterprise AI deployments.

According to AMD, the MI440X targets scalable training, fine-tuning and inference workloads in a compact eight-GPU configuration that can integrate into existing data centre infrastructure.

The MI440X complements the recently announced MI430X GPUs, which focus on high-precision scientific computing, HPC and sovereign AI workloads. These accelerators are set to power major AI factory supercomputers globally, including Discovery at Oak Ridge National Laboratory in the US and the Alice Recoque system, France’s first exascale supercomputer.

AMD also shared further details on its Instinct MI500 Series GPUs, scheduled for launch in 2027. The company says the MI500 Series, built on the AMD CDNA 6 architecture, advanced 2nm process technology and HBM4E memory, could deliver up to a 1,000x increase in AI performance compared with the MI300X GPUs introduced in 2023.
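As a rough illustration of what the 1,000x figure implies, the sketch below converts it into an average yearly multiple. It assumes steady compounding between the MI300X's 2023 introduction and the MI500's planned 2027 launch; the article does not state the basis of AMD's comparison (for example, per GPU or per system), so this is indicative only and the variable names are hypothetical.

```python
# Figures quoted by AMD; the comparison basis is not specified in the article
claimed_speedup = 1_000   # "up to a 1,000x increase in AI performance"
baseline_year = 2023      # MI300X introduced
target_year = 2027        # MI500 Series scheduled launch

span_years = target_year - baseline_year

# Assumption: steady compound improvement, so take the fourth root
annual_multiple = claimed_speedup ** (1 / span_years)

print(f"Span: {span_years} years")
print(f"Implied average improvement: about {annual_multiple:.1f}x per year")
```

Under that assumption, the claim corresponds to AI performance improving by between five and six times each year over the period.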

The company positioned the platform as delivering leadership performance across every level of the data centre stack.

Infrastructure supporting AI applications

While the announcements ranged from data centres to the edge, AMD emphasised the role of large-scale infrastructure in enabling AI innovation across industries.

During the keynote, partners including OpenAI, AstraZeneca, Illumina and Blue Origin highlighted how AMD-powered data centres are supporting breakthroughs in areas such as life sciences, generative AI and advanced research.

AMD also reinforced its involvement in the US government’s Genesis Mission, a public-private initiative aimed at securing long-term leadership in AI technologies.

Alongside its infrastructure roadmap, AMD announced a US$150m commitment to expand access to AI education, supporting efforts to bring AI into more classrooms and communities.

Together, these initiatives underline how AMD sees data centre innovation as central to both technological progress and broader societal impact in the AI era.